The issue

When migrating the solution I’m currently working on to Azure eight months ago I was initially looking at transferring the SSIS packages that performed the ETL. We were going with a Managed Instance rather than SQL Server on Azure VM (Virtual Machine). This was the recommendation from Microsoft consultants when I spoke with them. Firstly it’s significantly cheaper, while giving us similar functionality to an on-premise SQL instance. Secondly, it makes use of more Azure capability (scaling, etc.) rather than just dumping your existing on-premise solution into Azure as-is.

As we wouldn’t have a VM to install SSIS on my plan was to set up the SSIS integration runtime in Azure Data Factory (ADF). Then perform the necessary changes to the packages for the transfer from on-premise to Azure. It was the obvious choice given the large amount of packages we had, as well as the fact that we didn’t have any experience with ADF.

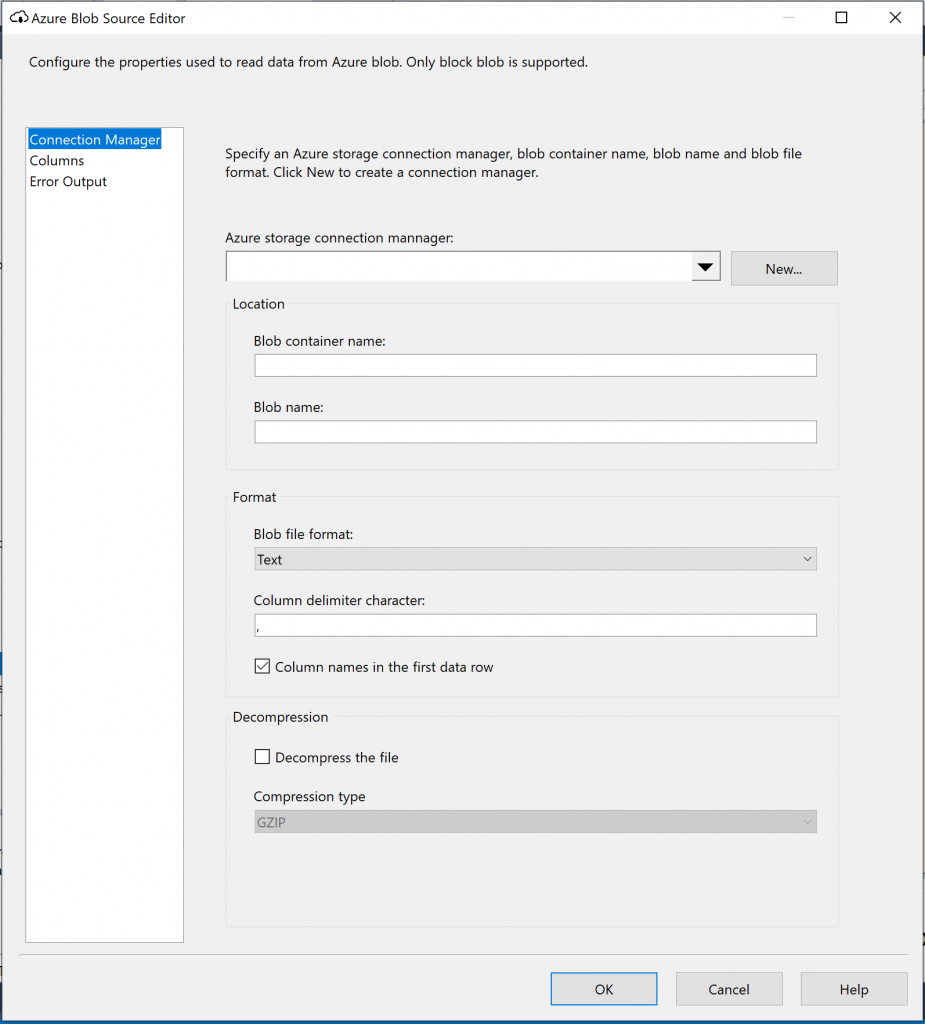

The bulk of our source data is received in text qualified CSV files. This is something that the flat file source and destinations in SSIS have no issue with. With the system moving to Azure however these files were going to land in Blob storage, and as you’ve probably guessed from the title of this post, the Azure Blob Source component in SSIS does not have the option to select a text qualifier.

The “Work Around”

I honestly couldn’t believe it didn’t have it. It’s something so obviously necessary in a huge amount of cases. I searched and searched, and eventually I did find something. A work around. Of sorts.

Microsoft’s suggestion is to use the Blob Download task to transfer the files to the file system of the VM that gets created as part of the SSIS integration runtime install. A bit of background here is that SSIS needs to run on a VM in Azure (which also means paying for the cost of the VM). From there they suggest using a flat file source to pick the files up from the VM. For me it’s a bit of a clunky solution. Even more so when you consider you can’t access that VM. To find where you can download the files to you have to go around the houses to find the folder structures for yourself as per the suggestion from Microsoft below.

http://microsoft-ssis.blogspot.com/2018/03/azure-blob-source-flat-file-source.html

My solution

I am dealing with a large number of files, some of which are tens of Gb in size. Add to this the fact that the data is also sensitive. So I didn’t really want it to be sitting on a drive that I wasn’t in control of, even if it’s presence there was transient. Also for me, the migration of the solution to Azure should enhance the solution. It shouldn’t require me to stick in what I think is a pretty ugly workaround on day one. Oh no. So I started looking at alternative options for the load, and I’m really glad that I did.

After a few days of testing I took the decision to migrate our ETL to ADF and I couldn’t be happier about the decision. Initially it got us over the text qualifier issue but while investigating that I also found out about some really useful features in ADF that not only cut the effort required for the migration significantly, but also that of the ongoing development of the solution.

If you are interested in a more detailed look at some of the great features available in ADF then please check out my videos on ADF.